2026

Rethinking Agentic Search with Pi-Serini: Is Lexical Retrieval Sufficient?

Tz-Huan Hsu, Jheng-Hong Yang, Jimmy Lin

arXiv preprint arXiv:2605.10848 2026

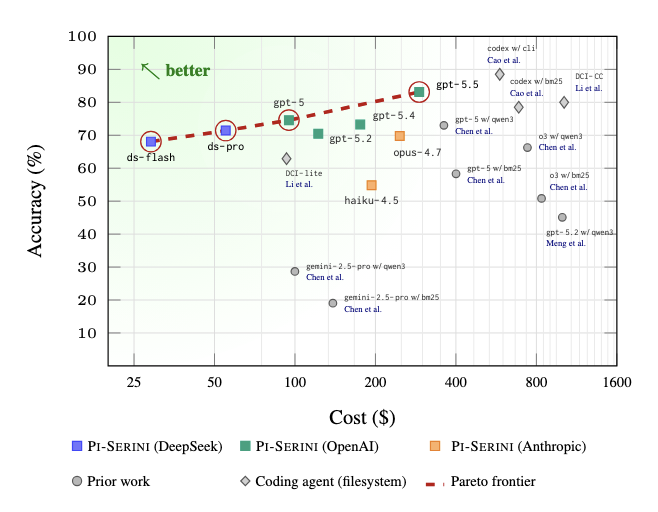

Does a lexical retriever suffice as large language models (LLMs) become more capable in an agentic loop? This question naturally arises when building deep research systems. We revisit it by pairing BM25 with frontier LLMs that have better reasoning and tool...

2025

Gosling Grows Up: Retrieval with Learned Dense and Sparse Representations Using Anserini

Jimmy Lin, Arthur Haonan Chen, Carlos Lassance, Xueguang Ma, Ronak Pradeep, Tommaso Teofili, Jasper Xian, Jheng-Hong Yang, Brayden Zhong, Vincent Zhong

Proceedings of the 48th International ACM SIGIR Conference on Research and Development in Information Retrieval 2025

The Anserini IR toolkit has come a long way since efforts began in 2015. Although the goals of the project - to bridge research and practice in information retrieval, and to provide reproducible, easy-to-use baselines - have remained constant, the world has...

2024

Retrieval Evaluation for Long-Form and Knowledge-Intensive Image–Text Article Composition

Jheng-Hong Yang, Carlos Lassance, Rafael S Rezende, Krishna Srinivasan, Stéphane Clinchant, Jimmy Lin

Proceedings of the First Workshop on Advancing Natural Language Processing for Wikipedia 2024

This paper examines the integration of images into Wikipedia articles by evaluating image–text retrieval tasks in multimedia content creation, focusing on developing retrieval-augmented tools to enhance the creation of high-quality multimedia articles. Desp...

Toward Automatic Relevance Judgment Using Vision–Language Models for Image–Text Retrieval Evaluation

Jheng-Hong Yang, Jimmy Lin

arXiv preprint arXiv:2408.01363 2024

Vision–Language Models (VLMs) have demonstrated success across diverse applications, yet their potential to assist in relevance judgments remains uncertain. This paper assesses the relevance estimation capabilities of VLMs, including CLIP, LLaVA, and GPT-4V...

Resources for Brewing BEIR: Reproducible Reference Models and Statistical Analyses

Ehsan Kamalloo, Nandan Thakur, Carlos Lassance, Xueguang Ma, Jheng-Hong Yang, Jimmy Lin

Proceedings of the 47th International ACM SIGIR Conference on Research and Development in Information Retrieval 2024

BEIR is a benchmark dataset originally designed for zero-shot evaluation of retrieval models across 18 different domain/task combinations. In recent years, we have witnessed the growing popularity of models based on representation learning, which naturally ...

2023

One Blade for One Purpose: Advancing Math Information Retrieval Using Hybrid Search

Wei Zhong, Sheng-Chieh Lin, Jheng-Hong Yang, Jimmy Lin

Proceedings of the 46th international ACM SIGIR conference on research and development in information retrieval 2023

Neural retrievers have been shown to be effective for math-aware search. Their ability to cope with math symbol mismatches, to represent highly contextualized semantics, and to learn effective representations are critical to improving math information retri...

AToMiC: An Image/Text Retrieval Test Collection to Support Multimedia Content Creation

Jheng-Hong Yang, Carlos Lassance, Rafael Sampaio De Rezende, Krishna Srinivasan, Miriam Redi, Stéphane Clinchant, Jimmy Lin

Proceedings of the 46th International ACM SIGIR conference on research and development in information retrieval 2023

This paper presents the AToMiC (Authoring Tools for Multi media Content) dataset, designed to advance research in image/text cross-modal retrieval. While vision–language pretrained transformers have led to significant improvements in retrieval effectiveness...

Simple Yet Effective Neural Ranking and Reranking Baselines for Cross-Lingual Information Retrieval

Jimmy Lin, David Alfonso-Hermelo, Vitor Jeronymo, Ehsan Kamalloo, Carlos Lassance, Rodrigo Nogueira, Odunayo Ogundepo, Mehdi Rezagholizadeh, Nandan Thakur, Jheng-Hong Yang, Xinyu Zhang

arXiv preprint arXiv:2304.01019 2023

The advent of multilingual language models has generated a resurgence of interest in cross-lingual information retrieval (CLIR), which is the task of searching documents in one language with queries from another. However, the rapid pace of progress has led ...

TREC 2023 AToMiC Overview

Jheng-Hong Yang, Carlos Lassance, Krishna Srinivasan, Miriam Redi, Stéphane Clinchant, Jimmy Lin

Text REtrieval Conference 2023

This paper presents an exploration of evaluating image–text retrieval tasks designed for multimedia content creation, with a particular focus on the dynamic interplay among various modalities, including text and images. The study highlights the pivotal role...

TREC 2023-H2Oloo in the Product Search Challenge

Jheng-Hong Yang, Jimmy Lin

Text REtrieval Conference 2023

This publication page is generated from bibliography/papers.bib.Edit the BibTeX entry and run uv run python scripts/generate_publications.py to update it.

2022

Evaluating Token-Level and Passage-Level Dense Retrieval Models for Math Information Retrieval

Wei Zhong, Jheng-Hong Yang, Yuqing Xie, Jimmy Lin

Findings of the Association for Computational Linguistics: EMNLP 2022 2022

With the recent success of dense retrieval methods based on bi-encoders, studies have applied this approach to various interesting downstream retrieval tasks with good efficiency and in-domain effectiveness. Recently, we have also seen the presence of dense...

Multiperiod Corporate Default Prediction Through Neural Parametric Family Learning

Wei-Lun Luo, Yu-Ming Lu, Jheng-Hong Yang, Jin-Chuan Duan, Chuan-Ju Wang

Proceedings of the 2022 SIAM International Conference on Data Mining (SDM) 2022

Default analysis plays an essential role in financial markets because it narrows the information gap between borrowers and lenders. Of late, machine learning-based methods have found their way to default analysis and typically view it as a risk classificati...

2021

Sparsifying Sparse Representations for Passage Retrieval by Top-k Masking

Jheng-Hong Yang, Xueguang Ma, Jimmy Lin

arXiv preprint arXiv:2112.09628 2021

Sparse lexical representation learning has demonstrated much progress in improving passage retrieval effectiveness in recent models such as DeepImpact, uniCOIL, and SPLADE. This paper describes a straightforward yet effective approach for sparsifying lexica...

Contextualized Query Embeddings for Conversational Search

Sheng-Chieh Lin, Jheng-Hong Yang, Jimmy Lin

Proceedings of the 2021 Conference on Empirical Methods in Natural Language Processing 2021

This paper describes a compact and effective model for low-latency passage retrieval in conversational search based on learned dense representations. Prior to our work, the state-of-the-art approach uses a multi-stage pipeline comprising conversational quer...

Multi-Stage Conversational Passage Retrieval: An Approach to Fusing Term Importance Estimation and Neural Query Rewriting

Sheng-Chieh Lin, Jheng-Hong Yang, Rodrigo Nogueira, Ming-Feng Tsai, Chuan-Ju Wang, Jimmy Lin

ACM Transactions on Information Systems (TOIS) 2021

Conversational search plays a vital role in conversational information seeking. As queries in information seeking dialogues are ambiguous for traditional ad hoc information retrieval (IR) systems due to the coreference and omission resolution problems inher...

In-Batch Negatives for Knowledge Distillation with Tightly-Coupled Teachers for Dense Retrieval

Sheng-Chieh Lin, Jheng-Hong Yang, Jimmy Lin

Proceedings of the 6th Workshop on Representation Learning for NLP (RepL4NLP-2021) 2021

We present an efficient training approach to text retrieval with dense representations that applies knowledge distillation using the ColBERT late-interaction ranking model. Specifically, we propose to transfer the knowledge from a bi-encoder teacher to a st...

Chatty Goose: A Python Framework for Conversational Search

Edwin Zhang, Sheng-Chieh Lin, Jheng-Hong Yang, Ronak Pradeep, Rodrigo Nogueira, Jimmy Lin

Proceedings of the 44th International ACM SIGIR Conference on Research and Development in Information Retrieval 2021

Chatty Goose is an open-source Python conversational search framework that provides strong, reproducible reranking pipelines built on recent advances in neural models. The framework comprises extensible modular components that integrate with popular librari...

Pyserini: A Python Toolkit for Reproducible Information Retrieval Research with Sparse and Dense Representations

Jimmy Lin, Xueguang Ma, Sheng-Chieh Lin, Jheng-Hong Yang, Ronak Pradeep, Rodrigo Nogueira

Proceedings of the 44th international ACM SIGIR conference on research and development in information retrieval 2021

Pyserini is a Python toolkit for reproducible information retrieval research with sparse and dense representations. It aims to provide effective, reproducible, and easy-to-use first-stage retrieval in a multi-stage ranking architecture. Our toolkit is self-...

Text-to-Text Multi-View Learning for Passage Re-Ranking

Jia-Huei Ju, Jheng-Hong Yang, Chuan-Ju Wang

Proceedings of the 44th international ACM SIGIR conference on research and development in information retrieval 2021

Recently, much progress in natural language processing has been driven by deep contextualized representations pretrained on large corpora. Typically, the fine-tuning on these pretrained models for a specific downstream task is based on single-view learning,...

Efficiently Teaching an Effective Dense Retriever with Balanced Topic-Aware Sampling

Sebastian Hofstätter, Sheng-Chieh Lin, Jheng-Hong Yang, Jimmy Lin, Allan Hanbury

Proceedings of the 44th international ACM SIGIR conference on research and development in information retrieval 2021

A vital step towards the widespread adoption of neural retrieval models is their resource efficiency throughout the training, indexing and query workflows. The neural IR community made great advancements in training effective dual-encoder dense retrieval (D...

2020

Designing Templates for Eliciting Commonsense Knowledge from Pretrained Sequence-to-Sequence Models

Jheng-Hong Yang, Sheng-Chieh Lin, Rodrigo Nogueira, Ming-Feng Tsai, Chuan-Ju Wang, Jimmy Lin

Proceedings of the 28th International Conference on Computational Linguistics 2020

While internalized “implicit knowledge” in pretrained transformers has led to fruitful progress in many natural language understanding tasks, how to most effectively elicit such knowledge remains an open question. Based on the text-to-text transfer transfor...

Distilling Dense Representations for Ranking Using Tightly-Coupled Teachers

Sheng-Chieh Lin, Jheng-Hong Yang, Jimmy Lin

arXiv preprint arXiv:2010.11386 2020

We present an approach to ranking with dense representations that applies knowledge distillation to improve the recently proposed late-interaction ColBERT model. Specifically, we distill the knowledge from ColBERT’s expressive MaxSim operator for computing ...

Conversational Question Reformulation via Sequence-to-Sequence Architectures and Pretrained Language Models

Sheng-Chieh Lin, Jheng-Hong Yang, Rodrigo Nogueira, Ming-Feng Tsai, Chuan-Ju Wang, Jimmy Lin

arXiv preprint arXiv:2004.01909 2020

This paper presents an empirical study of conversational question reformulation (CQR) with sequence-to-sequence architectures and pretrained language models (PLMs). We leverage PLMs to address the strong token-to-token independence assumption made in the co...

Tackling WinoGrande Schemas

Sheng-Chieh Lin, Jheng-Hong Yang, Rodrigo Nogueira, Ming-Feng Tsai, Chuan-Ju Wang, Jimmy Lin

arXiv preprint arXiv:2003.08380 2020

We applied the T5 sequence-to-sequence model to tackle the AI2 WinoGrande Challenge by decomposing each example into two input text strings, each containing a hypothesis, and using the probabilities assigned to the “entailment” token as a score of the hypot...

TREC 2020 Notebook: CAsT Track

Sheng-Chieh Lin, Jheng-Hong Yang, Jimmy Lin

TREC 2020

This notebook describes our participation (h2oloo) in TREC CAsT 2020. We first illustrate our multi-stage pipeline for conversational search: sequence-to-sequence query reformulation followed by an ad hoc text ranking pipeline; then, detail our proposed met...

Query Reformulation Using Query History for Passage Retrieval in Conversational Search

Sheng-Chieh Lin, Jheng-Hong Yang, Rodrigo Nogueira, Ming-Feng Tsai, Chuan-Ju Wang, Jimmy Lin

arXiv preprint arXiv:2005.02230 2020

Passage retrieval in a conversational context is essential for many downstream applications; it is however extremely challenging due to limited data resources. To address this problem, we present an effective multi-stage pipeline for passage ranking in conv...

2019

Query and Answer Expansion from Conversation History

Jheng-Hong Yang, Sheng-Chieh Lin, Chuan-Ju Wang, Jimmy Lin, Ming-Feng Tsai

TREC 2019

In this paper, we present our methods, experimental analysis, and final submissions for the Conversational Assistance Track (CAsT) at TREC 2019. In addition to language understanding, extracting knowledge from historical dialogues (eg, previous queries, sea...

2018

HOP-Rec: High-Order Proximity for Implicit Recommendation

Jheng-Hong Yang, Chih-Ming Chen, Chuan-Ju Wang, Ming-Feng Tsai

Best Paper Runner-Up

Proceedings of the 12th ACM conference on recommender systems 2018

Recommender systems are vital ingredients for many e-commerce services. In the literature, two of the most popular approaches are based on factorization and graph-based models; the former approach captures user preferences by factorizing the observed direct...